| Label | Explanation | Data Type |
| --- | --- | --- |
| Input Features | The input point feature class that will be aggregated into space-time bins. | Feature Layer |
| Output Space Time Cube | The output netCDF data cube that will be created to contain counts and summaries of the input feature point data. | File |
| Time Field | The field containing the date and time (timestamp) for each point. This field must be of type date. | Field |
| Template Cube (Optional) | A reference space-time cube that will be used to define the analysis extent, bin dimensions, and bin alignment of the Output Space Time Cube parameter value. The Time Step Interval, Distance Interval, and Reference Time parameter values are also obtained from the template cube. This template cube must be a netCDF (.nc file) that was created using this tool. A space-time cube created using the Defined locations option cannot be used as a template cube. | File |
| Time Step Interval (Optional) | The number of seconds, minutes, hours, days, weeks, months, or years that will represent a single time step. All points within the same Time Step Interval and Distance Interval parameter values will be aggregated. When a Template Cube parameter value is provided, this parameter is inactive, and the Time Step Interval value is obtained from the template cube. | Time Unit |
| Time Step Alignment (Optional) | Specifies how aggregation will occur based on a provided Time Step Interval parameter value. If a Template Cube parameter value is provided, the time-step alignment of the Template Cube value overrides this parameter setting. | String |
| Reference Time (Optional) | The date and time that will be used to align the time-step intervals. If you want to bin the data weekly from Monday to Sunday, for example, set a reference time of Sunday at midnight to ensure that bins break between Sunday and Monday at midnight. When a Template Cube parameter value is provided, this parameter is inactive and the reference time will be based on the Template Cube value. | Date |
| Distance Interval (Optional) | The size of the bins that will be used to aggregate the Input Features parameter value. All points that fall within the same Distance Interval and Time Step Interval parameter values will be aggregated. When aggregating into a hexagon grid, this distance is used as the height to construct the hexagon polygons. When a Template Cube parameter value is provided, this parameter is inactive and the distance interval value will be based on the Template Cube value. | Linear Unit |
| Summary Fields | The numeric field containing attribute values that will be used to calculate the specified statistic when aggregating into a space-time cube. Multiple statistic and field combinations can be specified. The available statistic types are sum, mean, minimum, maximum, standard deviation, and median. The available fill types are zeros, spatial neighbors, space-time neighbors, and temporal trend. Note: Null values in any of the summary field records will result in those features being excluded from the output cube. If there are null values in the Input Features parameter value, it is recommended that you run the Fill Missing Values tool first. If, after running the Fill Missing Values tool, there are still null values present and the count of points in each bin is part of your analysis strategy, you can create separate cubes, one for the count (without a Summary Fields parameter value) and one for the Summary Fields value. If the set of null values is different for each summary field, you can also create a separate cube for each summary field. | Value Table |
| Aggregation Shape Type (Optional) | Specifies the shape of the polygon mesh into which the input feature point data will be aggregated. | String |
| Defined Polygon Locations (Optional) | The polygon features into which the input point data will be aggregated. These can represent county boundaries, police beats, or sales territories, for example. | Feature Layer |
| Location ID (Optional) | The numeric field containing the ID number for each unique location. | Field |

Summary

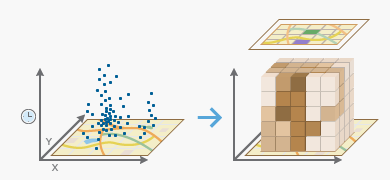

Summarizes a set of points into a netCDF data structure by aggregating them into space-time bins. Within each bin, the points are counted, and specified attributes are aggregated. For all bin locations, the trends for counts and summary field values are evaluated.

Learn more about how Create Space Time Cube By Aggregating Points works

Illustration

Usage

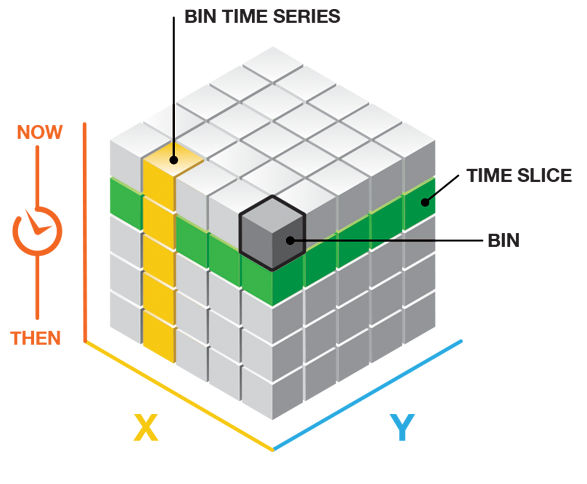

This tool aggregates point input features into space-time bins. The data structure it creates can be thought of as a three-dimensional cube composed of space-time bins with the x and y dimensions representing space and the t dimension representing time.

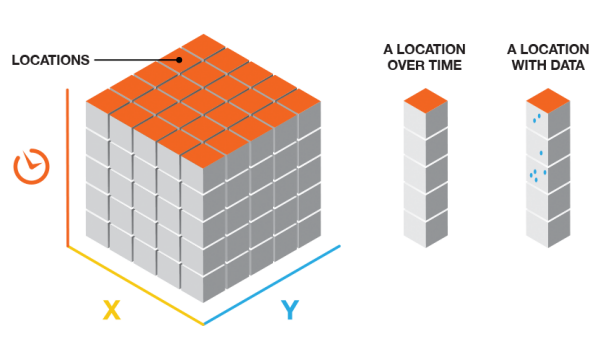

Every bin has a fixed position in space (x,y) and in time (t). Bins covering the same (x,y) area share the same location ID. Bins encompassing the same duration share the same time-step ID.

Each bin in the space-time cube has a LOCATION_ID, time_step_ID, and COUNT field value, as well as values for any fields specified in the Summary Fields parameter that were aggregated when the cube was created. Bins associated with the same physical location will share the same location ID and together will represent a time series. Bins associated with the same time-step interval will share the same time-step ID and together will comprise a time slice. The count value for each bin reflects the number of points that occurred at the associated location within the associated time-step interval.
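As a rough illustration of the structure described above, the following minimal sketch assigns points to bins on a square (fishnet) grid and counts them. The row-major location IDs, the grid origin, and the time steps counted forward from a start time are assumptions made for this example only, and `bin_point` is a hypothetical helper, not part of the tool.

```python
from collections import Counter
from datetime import datetime, timedelta


def bin_point(x, y, t, origin_x, origin_y, cell_size, n_cols, t0, step):
    """Return a (location_id, time_step_id) pair for one point.

    Assumptions for this sketch only: square cells, row-major location IDs
    counted from the (origin_x, origin_y) corner, time steps counted from t0.
    """
    col = int((x - origin_x) // cell_size)
    row = int((y - origin_y) // cell_size)
    location_id = row * n_cols + col
    time_step_id = (t - t0) // step  # timedelta // timedelta gives an int
    return location_id, time_step_id


# Hypothetical incidents: (x, y, timestamp) in projected units.
points = [
    (10.0, 10.0, datetime(2024, 1, 1)),
    (40.0, 20.0, datetime(2024, 1, 3)),
    (60.0, 10.0, datetime(2024, 1, 2)),
    (20.0, 30.0, datetime(2024, 1, 9)),
]

# COUNT per bin: 50-unit cells, 2 columns, 1-week time steps.
counts = Counter(
    bin_point(x, y, t, 0.0, 0.0, 50.0, 2, datetime(2024, 1, 1), timedelta(days=7))
    for x, y, t in points
)

# Bins that share a location_id form the time series for that location.
series_loc0 = {ts: n for (loc, ts), n in sorted(counts.items()) if loc == 0}
print(series_loc0)
```

Grouping the same `counts` by `time_step_id` instead of `location_id` would give a time slice.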

The Input Features parameter value should be points, for example, crime or fire events, disease incidents, customer sales data, or traffic accidents. Each point should have a date associated with it. The field containing the event timestamp must be of type date. The tool requires a minimum of 60 points and a variety of timestamps. The tool will fail if the parameters specified result in a cube with more than 2 billion bins.
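Before running the tool, it can help to check these two limits with a quick estimate. The sketch below assumes a fishnet grid and approximates the bin count as columns times rows times time steps; the tool's own count may differ slightly, and the function names and study-area numbers are hypothetical.

```python
import math

MIN_POINTS = 60              # minimum number of input points stated above
MAX_BINS = 2_000_000_000     # the tool fails above 2 billion bins


def estimate_bin_count(extent_width, extent_height, distance_interval, n_time_steps):
    """Estimate bins in a fishnet cube as columns * rows * time steps."""
    n_cols = math.ceil(extent_width / distance_interval)
    n_rows = math.ceil(extent_height / distance_interval)
    return n_cols * n_rows * n_time_steps


def check_inputs(n_points, extent_width, extent_height, distance_interval, n_time_steps):
    """Return a list of problems; an empty list means both limits are met."""
    problems = []
    if n_points < MIN_POINTS:
        problems.append(f"only {n_points} points; at least {MIN_POINTS} are required")
    n_bins = estimate_bin_count(extent_width, extent_height, distance_interval, n_time_steps)
    if n_bins > MAX_BINS:
        problems.append(f"about {n_bins:,} bins; the limit is {MAX_BINS:,}")
    return problems


# Hypothetical study area: 100 km x 80 km (in meters), 520 weekly time steps.
print(check_inputs(5000, 100_000, 80_000, 50, 520))  # 50 m cells
print(check_inputs(5000, 100_000, 80_000, 25, 520))  # 25 m cells
```

Halving the distance interval quadruples the number of locations, so the bin limit is reached quickly with small cells.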

This tool requires projected data to accurately measure distances.

Output from this tool is a netCDF representation of the input points as well as messages summarizing cube characteristics. Messages are written at the bottom of the Geoprocessing pane during tool operation. To access the messages, hover over the progress bar and click the pop-out button, or expand the messages section in the Geoprocessing pane. You can also access the messages for a previous run of the tool in the geoprocessing history. You can use the netCDF file as input to other tools such as the Emerging Hot Spot Analysis tool or the Local Outlier Analysis tool. See Visualizing the Space Time Cube for strategies to view cube contents.

Select a field of type date for the Time Field parameter. This field should contain the timestamp associated with each point feature. If the field is high precision (containing millisecond values), the timestamp of each space-time bin will include seconds only, and any milliseconds will be ignored.

The Time Step Interval parameter specifies how the aggregated points will be partitioned across time. You can aggregate points using one-day, one-week, or one-year intervals, for example. Time-step intervals are always fixed durations, and the tool requires a minimum of 10 time steps. If you do not provide a Time Step Interval value, the tool will calculate one. See How Create Space Time Cube By Aggregating Points works for details about how default time-step intervals are computed. Valid time-step interval units are years, months, weeks, days, hours, minutes, and seconds.

Note:

A number of time units appear in the Time Step Interval drop-down list; however, the tool only supports Years, Months, Weeks, Days, Hours, Minutes, and Seconds.

If the space-time cube could not be created, the tool may have been unable to structure the input data you provided into 10 time-step intervals. If you receive an error message running this tool, examine the timestamps of the input points to ensure that they include a range of values. The range of values must span at least 10 seconds, as this is the smallest time increment that the tool supports. Ten time-step intervals are required by the Mann-Kendall statistic.
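One way to examine the timestamps is to count how many time steps a candidate interval would produce. This is a minimal sketch that assumes steps are counted forward from the earliest timestamp; the tool's alignment options may place bin boundaries differently, and `count_time_steps` is a hypothetical helper.

```python
from datetime import datetime, timedelta

MIN_TIME_STEPS = 10  # minimum number of time steps required by the tool


def count_time_steps(timestamps, step):
    """Number of fixed-length steps needed to cover the timestamps.

    Assumption: steps are counted from the earliest timestamp.
    """
    span = max(timestamps) - min(timestamps)
    return span // step + 1  # timedelta // timedelta gives an int


# Hypothetical events: one every 3 days, spanning 54 days.
start = datetime(2024, 1, 1)
timestamps = [start + timedelta(days=3 * i) for i in range(19)]

for step in (timedelta(weeks=1), timedelta(days=3)):
    n = count_time_steps(timestamps, step)
    status = "ok" if n >= MIN_TIME_STEPS else "too few time steps"
    print(step, n, status)
```

In this example, a one-week interval gives too few time steps, while a three-day interval gives enough.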

When creating a space-time cube with incident data, depending on the Time Step Interval value that you specify, it is possible to create a bin at the beginning or end of the cube that does not have data across the entire span of time. For example, if you specify 1 month as the Time Step Interval value, and the data does not break up evenly into 1-month intervals, there will be a time step at either the beginning or end that does not have data over its entire span. This can bias the results because it will appear that the temporally biased time step has significantly fewer points than other time steps, which is an artificial result of the aggregation scheme. The messages indicate whether there is temporal bias in the first or last time step. One solution is to create a selection set of the data so that it does fall evenly within the specified Time Step Interval value.

It is not uncommon for a dataset to have a regularly spaced temporal distribution. For example, you might have yearly data that all falls on January 1 of each year, or monthly data that is all timestamped the first of each month. This type of data is often referred to as panel data. With panel data, temporal bias calculations will often show very high percentages. This is to be expected, as each bin will only cover one particular time unit in the given time step. For example, if you specify 1 year as the Time Step Interval value, and the data fell on January 1 of each year, each bin would only cover one day out of the year. This is acceptable since it applies to each bin. Temporal bias becomes an issue when it is only present for certain bins due to bin creation parameters rather than true data distribution. It is important to evaluate the temporal bias in terms of the expected coverage in each bin based on the data's distribution.

The temporal bias in the output report is calculated as the percentage of the time span that has no data present. For example, an empty bin would have 100 percent temporal bias. A bin with a 1-month time span and an end Time Step Alignment value that only has data for the second two weeks of the first time step would have a 50 percent first time step temporal bias. A bin with a 1-month time span and a start Time Step Alignment value that only has data for the first two weeks of the last time step would have a 50 percent last time step temporal bias.
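The percentages in these examples can be reproduced with a short calculation. The sketch below treats the covered part of a time step as the continuous span between the earliest and latest timestamps in it, which is an assumption; the text only defines the bias as the percentage of the span with no data. A 4-week step stands in for the 1-month step so that two weeks is exactly half.

```python
from datetime import datetime


def temporal_bias_percent(step_start, step_end, data_start, data_end):
    """Percentage of a time step's span that has no data.

    data_start and data_end are the earliest and latest timestamps that fall
    in the step, or None when the bin is empty.
    """
    total = (step_end - step_start).total_seconds()
    if data_start is None or data_end is None:
        return 100.0
    covered = (min(data_end, step_end) - max(data_start, step_start)).total_seconds()
    return 100.0 * (1.0 - max(covered, 0.0) / total)


# A 4-week step used as a stand-in for the 1-month example in the text.
step_start, step_end = datetime(2024, 1, 1), datetime(2024, 1, 29)

# Data only in the second two weeks of the step.
print(temporal_bias_percent(step_start, step_end, datetime(2024, 1, 15), step_end))
# Data only in the first two weeks of the step.
print(temporal_bias_percent(step_start, step_end, step_start, datetime(2024, 1, 15)))
# Empty bin.
print(temporal_bias_percent(step_start, step_end, None, None))
```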

Once you create a space-time cube, the spatial extent of the cube can never be extended. If further analysis of the space-time cube will involve the use of a study area (such as a Polygon Analysis Mask value for the Emerging Hot Spot Analysis tool), you need to ensure that the Polygon Analysis Mask value does not extend beyond the extent of the Input Features value when you create the cube. Setting the study area polygons that you will use in future analysis as the Extent environment when you create the cube will ensure that the extent of the cube is as large as it needs to be at the beginning of the analysis.

Legacy:

The method that the Create Space Time Cube By Aggregating Points tool uses to create the extent of the space-time cube changed at the releases of ArcGIS Pro 1.3 and ArcMap 10.5. You can learn more about this change in Space-time cube bias adjustment. The new bias adjustment will provide a better result, but if you need to recreate the cube with the previous extent, you can specify the extent through the Extent environment setting.

You can create a value for Template Cube that can be used each time you run the analysis, especially if you want to compare data for a series of time periods. By providing the same template cube, you ensure that the extent of the analysis, bin size, time-step interval, reference time, and time-step alignment are always consistent.

If you provide a Template Cube value, input points that fall outside of the template cube extent will be excluded from analysis. Also, if the spatial reference associated with the input point features is different from the spatial reference associated with the template cube, the tool will project the Input Features value to match the template cube before beginning the aggregation process. The spatial reference associated with the template cube will override the Output Coordinate System environment setting as well. In addition, the Template Cube value, when specified, will determine the processing extent used, even if you specify a different processing extent. See How Creating a Space Time Cube works for more information.

The Reference Time parameter value can be a date and time value or solely a date value; it cannot be solely a time value. The expected format is determined by the computer's regional time settings.

Use the Aggregation Shape Type parameter to specify how the points are aggregated spatially. If you want to aggregate to a regularly shaped grid, you can specify either a fishnet or hexagon shape. While fishnet grids are typically used, hexagons may be a better option for certain analyses. If you have boundaries or locations that make sense for the analysis (such as census blocks or police beats), you can also use those to aggregate using the Defined Locations option.

Note:

If the input features used for the Defined Locations option are stored in a file geodatabase and contain true curves (stored as arcs as opposed to stored with vertices), polygon shapes will be distorted when stored in the space-time cube. To check whether the Defined Locations features contain true curves, run the Check Geometry tool with the Validation Method parameter set to OGC. If you receive an error message stating that the selected option does not support nonlinear segments, true curves exist in the dataset and may be eliminated and replaced with vertices using the Densify tool with the Densification Method parameter set to Angle before creating the space-time cube.

Because a grid cube is always rectangular even if the point data is not, some locations will have point counts of zero for all time steps. For many analyses, only locations with data (at least one point count greater than zero for at least one time step) will be included in the analysis.


When creating a cube aggregating into defined locations, all user-provided defined locations will be included, even those that have no points at any time step.

Use the Distance Interval parameter to specify how large the space-time bins will be. The bins are used to aggregate the point data. You can make each fishnet bin 50 meters by 50 meters for example. If you are aggregating into hexagons, the Distance Interval value is the height of each hexagon, and the width of the resulting hexagons will be 2 times the height divided by the square root of 3. Unless a Template Cube parameter value is specified, the bin in the upper-left corner of the cube will be centered on the upper-left corner of the spatial extent for the Input Features parameter value.
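The hexagon relationship stated above (width equals 2 times the height divided by the square root of 3) is easy to check numerically. The helper name below is hypothetical.

```python
import math


def hexagon_width(height):
    """Width of a regular hexagon whose height is the Distance Interval."""
    return 2.0 * height / math.sqrt(3.0)


# Example heights in any linear unit, such as 50 meters or 3 miles.
for height in (50.0, 3.0):
    print(f"height {height:g} -> width {hexagon_width(height):.3f}")
```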

Provide a Distance Interval value that makes sense for the analysis. Find the balance between a distance interval that is too large and loses the underlying patterns in the point data, and a distance interval that is too small so the result is a cube filled with zero counts. If you do not provide a Distance Interval value, the tool will calculate one. See How Create Space Time Cube By Aggregating Points works for details about how default distance intervals are computed. The supported distance interval units are kilometers, meters, miles, and feet.

The trend analysis performed on the aggregated count data and summary field values is based on the Mann-Kendall statistic.

The following statistical operations are available for the aggregation of attributes with this tool: sum, mean, minimum, maximum, standard deviation, and median.

When filling empty bins with the SPATIAL_NEIGHBORS fill type, a Queens Case Contiguity is used (contiguity based on edges and nodes) of the 2nd order (includes neighbors and neighbors of neighbors). A minimum of four spatial neighbors are required to fill the empty bin using this option.

When filling empty bins with the SPACE_TIME_NEIGHBORS fill type, a Queens Case Contiguity is used (contiguity based on edges and nodes) of the 2nd order (includes neighbors and neighbors of neighbors). Additionally, temporal neighbors are used for each of those bins found to be spatial neighbors by going backward and forward two time steps. A minimum of 13 space time neighbors are required to fill the empty bin using this option.
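The spatial part of both neighbor-based fill types can be sketched for a single time slice of a fishnet grid. In this sketch, second-order queen contiguity is taken to mean every cell within two queen moves (up to 24 cells for an interior bin). Two things are assumptions rather than documented behavior: the fill value is the mean of the neighbors that have data, and the minimum of four applies to neighbors with data. Hexagon grids and the temporal neighbors of the SPACE_TIME_NEIGHBORS option are not covered.

```python
def second_order_queen_neighbors(row, col, n_rows, n_cols):
    """Cells within two queen moves of (row, col), excluding the cell itself."""
    neighbors = []
    for dr in range(-2, 3):
        for dc in range(-2, 3):
            r, c = row + dr, col + dc
            if (dr, dc) != (0, 0) and 0 <= r < n_rows and 0 <= c < n_cols:
                neighbors.append((r, c))
    return neighbors


def fill_from_spatial_neighbors(grid, row, col, min_neighbors=4):
    """Return a fill value for an empty bin, or None if too few neighbors.

    Assumption: the fill value is the mean of the neighbors that have data.
    """
    cells = second_order_queen_neighbors(row, col, len(grid), len(grid[0]))
    values = [grid[r][c] for r, c in cells if grid[r][c] is not None]
    if len(values) < min_neighbors:
        return None
    return sum(values) / len(values)


# One time slice of a hypothetical 5 x 5 fishnet; None marks an empty bin.
grid = [
    [None, None, None, None, None],
    [None, 2.0, 4.0, None, None],
    [None, 6.0, None, 8.0, None],
    [None, None, None, None, None],
    [None, None, None, None, None],
]
print(len(second_order_queen_neighbors(2, 2, 5, 5)))  # interior cell
print(fill_from_spatial_neighbors(grid, 2, 2))        # four neighbors with data
print(fill_from_spatial_neighbors(grid, 4, 4))        # too few neighbors
```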

When filling empty bins with the TEMPORAL_TREND fill type, the first two time periods and last two time periods at a given location must have values in their bins to interpolate values at other time periods for that location.

The TEMPORAL_TREND option uses the Interpolated Univariate Spline method in the SciPy Interpolation package.
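The precondition for the TEMPORAL_TREND fill type can be checked per location before building the cube. This is a minimal sketch with a hypothetical helper name; it tests only the stated requirement and does not reproduce the spline interpolation itself.

```python
def can_fill_by_temporal_trend(series):
    """True when the first two and last two time periods all have values."""
    return all(value is not None for value in series[:2] + series[-2:])


# Hypothetical time series at one location; None marks an empty bin.
print(can_fill_by_temporal_trend([3, 5, None, None, 4, 6]))  # gaps in the middle
print(can_fill_by_temporal_trend([3, None, 2, 7, 4, 6]))     # second period empty
```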

Null values that are present in any of the summary field records will result in those features being excluded from the output cube. If there are null values present in the Input Features value, it is recommended that you run the Fill Missing Values tool first. If, after running the Fill Missing Values tool, there are still null values present, and the count of points in each bin is part of your analysis strategy, you can create separate cubes, one for the count (without a Summary Fields value) and one with a Summary Fields value. If the set of null values is different for each summary field, you can also create a separate cube for each summary field.

This tool can take advantage of the increased performance available in systems that use multiple CPUs (or multicore CPUs). The tool will default to using 50 percent of the processors available; however, the number of CPUs used can be increased or decreased using the Parallel Processing Factor environment. The increased processing speed is most noticeable when creating larger space-time cubes.

Parameters

arcpy.stpm.CreateSpaceTimeCube(in_features, output_cube, time_field, {template_cube}, {time_step_interval}, {time_step_alignment}, {reference_time}, {distance_interval}, summary_fields, {aggregation_shape_type}, {defined_polygon_locations}, {location_id})

| Name | Explanation | Data Type |
| --- | --- | --- |
| in_features | The input point feature class that will be aggregated into space-time bins. | Feature Layer |
| output_cube | The output netCDF data cube that will be created to contain counts and summaries of the input feature point data. | File |
| time_field | The field containing the date and time (timestamp) for each point. This field must be of type date. | Field |
| template_cube (Optional) | A reference space-time cube that will be used to define the analysis extent, bin dimensions, and bin alignment of the output_cube parameter value. The time_step_interval, distance_interval, and reference_time parameter values are also obtained from the template cube. This template cube must be a netCDF (.nc file) that was created using this tool. A space-time cube created using the DEFINED_LOCATIONS option cannot be used as a template cube. | File |
| time_step_interval (Optional) | The number of seconds, minutes, hours, days, weeks, months, or years that will represent a single time step. All points within the same time_step_interval and distance_interval parameter values will be aggregated. When a template_cube parameter value is provided, this parameter is ignored, and the time_step_interval value is obtained from the template cube. Examples of valid values for this parameter are 1 Weeks, 13 Days, or 1 Months. | Time Unit |
| time_step_alignment (Optional) | Specifies how aggregation will occur based on a provided time_step_interval parameter value. If a template_cube parameter value is provided, the time-step alignment of the template_cube value overrides this parameter setting. | String |
| reference_time (Optional) | The date and time that will be used to align the time-step intervals. If you want to bin the data weekly from Monday to Sunday, for example, set a reference time of Sunday at midnight to ensure that bins break between Sunday and Monday at midnight. When a template_cube parameter value is provided, this parameter is ignored and the reference time will be based on the template_cube value. | Date |
| distance_interval (Optional) | The size of the bins that will be used to aggregate the in_features parameter value. All points that fall within the same distance_interval and time_step_interval parameter values will be aggregated. When aggregating into a hexagon grid, this distance is used as the height to construct the hexagon polygons. When a template_cube parameter value is provided, this parameter is ignored and the distance interval value will be based on the template_cube value. | Linear Unit |
| summary_fields [[Field, Statistic, Fill Empty Bins with],...] | The numeric field containing attribute values that will be used to calculate the specified statistic when aggregating into a space-time cube. Multiple statistic and field combinations can be specified. The available statistic types are sum, mean, minimum, maximum, standard deviation, and median. The available fill types are ZEROS, SPATIAL_NEIGHBORS, SPACE_TIME_NEIGHBORS, and TEMPORAL_TREND. Note: Null values in any of the summary field records will result in those features being excluded from the output cube. If there are null values in the in_features parameter value, it is recommended that you run the Fill Missing Values tool first. If, after running the Fill Missing Values tool, there are still null values present and the count of points in each bin is part of your analysis strategy, you can create separate cubes, one for the count (without a summary_fields parameter value) and one for the summary_fields value. If the set of null values is different for each summary field, you can also create a separate cube for each summary field. | Value Table |
| aggregation_shape_type (Optional) | Specifies the shape of the polygon mesh into which the input feature point data will be aggregated. | String |
| defined_polygon_locations (Optional) | The polygon features into which the input point data will be aggregated. These can represent county boundaries, police beats, or sales territories, for example. | Feature Layer |
| location_id (Optional) | The numeric field containing the ID number for each unique location. | Field |

Code sample

The following Python window script demonstrates how to use the CreateSpaceTimeCube function.

import arcpy

arcpy.env.workspace = r"C:\STPM"

arcpy.stpm.CreateSpaceTimeCube(

"Homicides.shp", "Homicides.nc", "OccDate", "#", "3 Months", "END_TIME",

"#", "3 Miles", [["Property", "MEDIAN", "SPACE_TIME_NEIGHBORS"], ["Age", "STD", "ZEROS"]],

"HEXAGON_GRID")

The following stand-alone Python script demonstrates how to use the CreateSpaceTimeCube function.

# Create Space Time Cube of homicide incidents in a metropolitan area.

# Import system modules

import arcpy

# Set arcpy to overwrite existing output by default

arcpy.env.overwriteOutput = True

# Local variables...

workspace = r"C:\STPM"

# Set the current workspace (to avoid having to specify the full path to the

# feature classes each time).

arcpy.env.workspace = workspace

# Create Space Time Cube of homicide incident data with 3 months and 3 miles

# settings. Also aggregate the median of property loss, with no-data bins filled

# from space-time neighbors, and the standard deviation of the victim's age,

# with no-data bins filled with zeros.

# Process: Create Space Time Cube By Aggregating Points

cube = arcpy.stpm.CreateSpaceTimeCube(

"Homicides.shp", "Homicides.nc", "MyDate", "#", "3 Months", "END_TIME", "#",

"3 Miles", [["Property", "MEDIAN", "SPACE_TIME_NEIGHBORS"], ["Age", "STD", "ZEROS"]],

"HEXAGON_GRID")

# Create a polygon that defines where incidents are possible.

# Process: Minimum Bounding Geometry of homicide incident data

arcpy.management.MinimumBoundingGeometry(

"Homicides.shp", "bounding.shp", "CONVEX_HULL", "ALL", "#", "NO_MBG_FIELDS")

# Emerging Hot Spot Analysis of homicide incident cube using 5 Miles

# neighborhood distance and 2 neighborhood time step to detect hot spots.

# Process: Emerging Hot Spot Analysis

cube = arcpy.stpm.EmergingHotSpotAnalysis(

"Homicides.nc", "COUNT", "EHS_Homicides.shp", "5 Miles", 2, "bounding.shp")

Environments

Special cases

- Output Coordinate System

The spatial reference associated with the Template Cube, when specified, will override the Output Coordinate System environment setting.

- Extent

The processing extent of the Template Cube, when specified, will override the environment setting processing extent.

Licensing information

- Basic: Yes

- Standard: Yes

- Advanced: Yes