Available with Image Analyst license.

The geospatial video capability in the ArcGIS Image Analyst extension allows you to include motion imagery and video and provides playback and geospatial analysis capabilities for metadata-compliant videos. Geospatial video refers to the combination of a video stream and associated metadata into one video file, which makes the video geospatially aware. The sensor systems collect camera orientation, platform position and attitude, and other data and encode it into the video stream so that each video frame is associated with geopositional information.

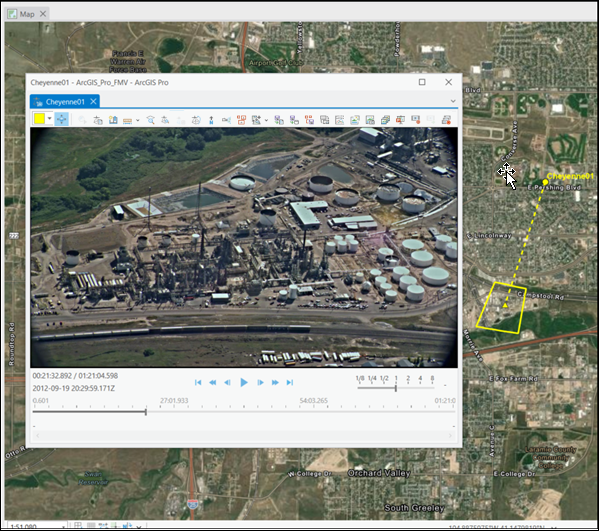

This geospatially enabled video data, along with the computational functionality of ArcGIS Pro, allows you to view and manipulate the video while being fully aware of the sensor dynamics and field of view (FOV), and display this information in the map view. It also allows you to analyze and edit feature data in either the video view or the map view, providing telestration capability.

Motion imagery tools in ArcGIS Pro use the metadata to seamlessly convert coordinates between the video image space and map space, similar to the way that the image coordinate system in image space analysis transforms still imagery. This conversion provides the foundation for interpreting video data in the full context of all other geospatial data and information in your GIS. For example, you can view the video frame footprint, frame center, and position of the imaging platform on the map view as the video plays, together with GIS layers such as buildings with IDs, geofences, and other pertinent information.

You can analyze video data in real time or forensically, immediately after a collection. Video is well suited for situation awareness, since it is often the most current imagery available for a location. For example, if you are providing damage assessment after a natural disaster, you can analyze the latest video collected from a drone together with existing GIS data layers. Because the video footprint is visible on the map, you know exactly which buildings and infrastructure appear in the video. You can assess their condition, mark ground features in the video and map, bookmark their locations, and describe them in notes. Video tools are designed to help you quickly assess, analyze, and disseminate actionable information for timely decision support.

For more information about video in ArcGIS Pro, see Frequently Asked Questions.

Geospatial video features and benefits

Video uses crucial metadata and provides visual and analytical processing tools, as well as the following ArcGIS Pro capabilities to support project and mission-critical workflows:

- Video is fully integrated into ArcGIS Pro and uses the system architecture, data models, tools and capabilities, and sharing across ArcGIS.

- View and analyze live-stream videos and archived videos.

- Move the video player anywhere on your computer display, resize it, minimize it, and close it.

- The video player is linked to the map display, enabling the following:

  - Display of the video footprint, sensor location, and field of view on the map.

  - Projection and display on the map of any information collected in the video player, together with your existing GIS data.

  - Zooming the map to the video frame and following videos across the map.

- Open and play several videos at the same time. Each video and its associated information are identified by a unique color when displayed on the map.

- Use intuitive playback controls, image and video clip capture, and analysis tools.

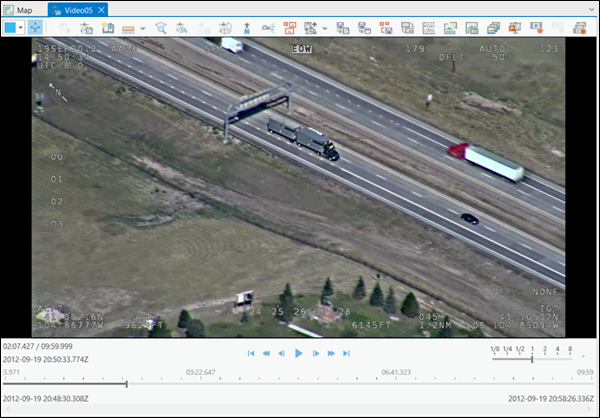

- Display metadata in real time.

- Create and manage bookmarks.

- Mark locations and phenomena of interest.

- Use GPU-accelerated display.

- Create video metadata.

These functional capabilities form the basis for workflows that integrate spatial context into the video analysis process.

Uses for geospatial video

A primary use of video is for decision support in operational environments. Understanding who uses video and how it is collected and used provides important context for evaluating the functionality.

Industries that use geospatial video

Video can be used for monitoring remote, inaccessible, or dangerous locations. The types of organizations that use video include the following:

- Public safety and emergency management

- Defense

- Oil companies

- Local and federal governments

- Border patrol

- Utilities

- Natural resources professionals

The following are some of the types of applications for geospatial video:

- Emergency management and public safety

  - Situation awareness

  - Damage assessment

  - Response

  - Mitigation

  - Security monitoring

- Asset management

  - Corridor mapping, monitoring, and management

    - Utility transmission, such as electric, gas, water, and other conveyance

    - Transportation, such as roads, bridges, rail, highways, and air-land-sea ports

    - Communication, such as cell towers and infrastructure

    - Hydrology, such as natural and human-made infrastructure

    - Riparian, such as stream buffers per reach, habitat, diversity mapping, and links

- Defense

  - Intelligence, surveillance, and reconnaissance

  - Mission support

  - Counterintelligence

- Monitoring infrastructure

  - Inventory

  - New development

  - Verifying as-built to planned

  - Compliance and safety

Geospatial video data collection

The video application in ArcGIS Pro uses data collected from a variety of remote sensing platforms. The video data and metadata are collected concurrently, and the metadata is encoded in the video file either in real time onboard the sensor platform or later in a processing step called multiplexing.

Video data is collected from a variety of sensor platforms including the following:

- Drones (UAVs, UASs, and RPVs)

- Fixed-wing and helicopter aerial platforms

- Orbital spaceborne sensors

- Vehicle-mounted cameras

- Handheld mobile devices and cameras

- Stationary devices for persistent surveillance

The supported video formats, including high resolution 4K formats, are listed in the following table:

| Description | Extension |
|---|---|
| AOMedia Video 1 File | .av1 |
| Audio Video Interleaved | .avi |
| H264 Video File¹ | .h264 |
| H265 Video File¹ | .h265 |
| HLS (Adaptive Bitrate (ABR)) | .m3u8 |
| MOV file | .mov |
| MPEG-2 Transport Stream | .ts |
| MPEG-2 Program Stream | .ps |
| M2TS Transport Stream | .m2ts |
| MPEG File | .mpg |
| MPEG-2 File | .mpg2 |
| MPEG-2 File | .mp2 |
| MPEG File | .mpeg |
| MPEG-4 Movie | .mp4 |
| MPEG-4 File | .mpg4 |
| MPEG-Dash | .mpd |
| VLC (mpeg2) | .mpeg2 |
| VLC Media File (mpeg4) | .mpeg4 |
| VLC Media File (vob) | .vob |
| Windows Media Video File | .wmv |

¹ Requires multiplexing.
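It can be useful to screen files against the table above before loading them. The following Python sketch is a hypothetical helper, not part of ArcGIS Pro. The function name `classify_video` is illustrative only. It looks at the file extension alone, so it cannot confirm that a file actually contains compliant metadata.

```python
from pathlib import Path

# Extensions from the supported-formats table.
SUPPORTED_EXTENSIONS = {
    ".av1", ".avi", ".h264", ".h265", ".m3u8", ".mov", ".ts", ".ps",
    ".m2ts", ".mpg", ".mpg2", ".mp2", ".mpeg", ".mp4", ".mpg4", ".mpd",
    ".mpeg2", ".mpeg4", ".vob", ".wmv",
}
# Formats marked with footnote 1 in the table: these require multiplexing.
NEEDS_MULTIPLEXING = {".h264", ".h265"}


def classify_video(path: str) -> str:
    """Return 'unsupported', 'multiplex', or 'ok' based only on the extension."""
    ext = Path(path).suffix.lower()
    if ext not in SUPPORTED_EXTENSIONS:
        return "unsupported"
    if ext in NEEDS_MULTIPLEXING:
        return "multiplex"
    return "ok"


for name in ["flight.H264", "survey.mp4", "clip.mkv"]:
    print(name, classify_video(name))
```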

Video system requirements

The minimum, recommended, and optimal requirements for motion imagery in ArcGIS Pro are listed below. Minimum requirements support playback of a single video. Recommended requirements support the use of Full Motion Video tools during playback of a single video. Optimal requirements support the use of video tools during playback of multiple videos.

| Item | Supported and recommended |
|---|---|
| CPU | A minimum of 4 cores at 2.6 GHz, with simultaneous multithreading, is required. Simultaneous multithreading, or hyperthreading, typically provides two threads per core. A multithreaded 2-core CPU has four threads available for processing, and a multithreaded 6-core CPU has 12. Six cores are recommended. For optimal performance, use 10 cores. |
| GPU | GPU type: an NVIDIA GPU with CUDA Compute Capability 3.7 is the minimum, and 6.1 or later is recommended. See the list of CUDA-enabled cards to determine the compute capability of a GPU. GPU driver: NVIDIA driver version 531.61 or later is recommended for better performance. Dedicated graphics memory: a minimum of 4 GB is required. 8 GB or more is recommended, depending on the number of concurrently active videos. |
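The thread arithmetic in the CPU row can be checked with a short Python sketch. It assumes two threads per core, the typical simultaneous multithreading figure given above. The names are hypothetical, and the code does not query real hardware.

```python
# Assumption: SMT/hyperthreading typically exposes two threads per core.
SMT_THREADS_PER_CORE = 2

# Core counts for each tier, from the CPU row of the requirements table.
CORE_COUNTS = {"minimum": 4, "recommended": 6, "optimal": 10}


def logical_threads(cores: int, threads_per_core: int = SMT_THREADS_PER_CORE) -> int:
    """Number of hardware threads available for processing."""
    return cores * threads_per_core


for tier, cores in CORE_COUNTS.items():
    print(f"{tier}: {cores} cores -> {logical_threads(cores)} threads")
```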

Geospatial video capabilities

Video supports a variety of operational environments. These range from time-critical situation awareness and emergency response using live-streaming video, to analysis of hourly or daily archived videos for monitoring, to forensic analysis of historical archived data. Each type of application requires intuitive visualization and analysis tools to quickly identify and record features or situations of interest and to disseminate extracted information to decision makers and stakeholders. The essential video capabilities for decision support are described below.

Contextual video tabs

When you load a video in the map and select it in the Contents pane, the Standalone Video, Player, Metadata, and Object Tracking contextual tabs are displayed.

Standalone Video tab

When a video is loaded in the display, the video file is listed in the Contents pane, and the Standalone Video tab is enabled. The tools on the Standalone Video tab allow you to manage video data. This tab is organized into the Open, Bookmarks, Save, and Manage groups.

The tools on the Standalone Video tab are described in the Standalone Video tab topic.

Player tab

The Player tab is contextual and is enabled when you select a video in the Contents pane.

The Player tab contains tools for navigating the video, adding and managing graphics, and viewing video metadata. These tools are also available on the player.

Tool operations include zooming the associated map view to display the full video frame on the ground, displaying the sensor ground track and field of view of the video frame on the ground, and zooming and panning the map to follow the video on the ground. These tools allow you to have geographical context when working with video data.

Metadata tab

The Metadata tab contains tools for working with geospatial video metadata, including a tool to generate metadata for a static sensor. Its set of tools will continue to expand in future releases.

Object Tracking tab

More information on the Object Tracking tab can be found in the Track objects in motion imagery documentation.

Video player

The video player includes standard video controls such as play, fast forward, rewind, step forward, step backward, jump to the beginning, and jump to the end of the video. You can zoom in to and roam a video while it is playing or paused. The video player works with maps, global scenes, and local scenes. The Orient Scene Camera feature orients the scene view camera to the perspective of the video sensor and is enabled only for local and global scenes.

Additional tools include capturing, annotating, and saving video bookmarks; capturing single video frames as images; and exporting video clips.

Bookmarks

Creating video bookmarks is an important function for recording phenomena and features of interest when analyzing a video. You can collect video bookmarks in the different modes of playing a video, such as play, pause, fast-forward, and rewind. You can describe bookmarks in the Bookmark pane that opens when you collect a video bookmark. Bookmarks are collected and managed in the Bookmarks pane, available on the ArcGIS Pro Map tab in the Navigate group.

Add metadata to videos

Motion imagery tools can use only videos that contain the essential metadata. Professional-grade airborne video collection systems generally collect the required metadata and encode it into the video file in real time. These videos can be input directly into the Full Motion Video application, either in live-streaming mode or from an archived file.

Consumer-grade video collection systems often produce separate video data and metadata files that must be combined into a single metadata-compliant video file. This process is performed in the software and is referred to as multiplexing. Full Motion Video includes the Video Multiplexer tool, which encodes the metadata at the correct locations in the video file to produce a single metadata-compliant video file. Both the video file and the metadata file use time stamps, which synchronize the encoding so that each metadata record is placed at the proper location in the video.

The metadata is generated from appropriate sensors, such as GPS for x,y,z position, an altimeter, and an inertial measurement unit (IMU) or other data sources for camera orientation. The metadata file must be in comma-separated values (CSV) format.
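Multiplexing relies on time stamps to pair metadata with video. The minimal Python sketch below illustrates that pairing idea with nearest-time-stamp matching. It is a conceptual illustration, not the Video Multiplexer tool's implementation. The sample values are invented. The `UNIX Time Stamp` column name is borrowed from the field mentioned later in this topic, and the time stamps are assumed to be in microseconds.

```python
import bisect
import csv
import io

# Hypothetical metadata CSV: one row per sensor sample, times in microseconds.
METADATA_CSV = """UNIX Time Stamp,Sensor Latitude,Sensor Longitude
1700000000000000,34.0560,-117.1950
1700000000500000,34.0561,-117.1951
1700000001000000,34.0562,-117.1952
"""


def load_metadata(text: str) -> list[dict]:
    """Parse the CSV, convert time stamps to int, and sort rows by time."""
    rows = list(csv.DictReader(io.StringIO(text)))
    for row in rows:
        row["UNIX Time Stamp"] = int(row["UNIX Time Stamp"])
    return sorted(rows, key=lambda r: r["UNIX Time Stamp"])


def nearest_row(rows: list[dict], frame_time_us: int) -> dict:
    """Return the metadata row whose time stamp is closest to the frame time."""
    times = [r["UNIX Time Stamp"] for r in rows]
    i = bisect.bisect_left(times, frame_time_us)
    # Only the neighbors on either side of the insertion point can be closest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(rows)]
    best = min(candidates, key=lambda j: abs(times[j] - frame_time_us))
    return rows[best]


rows = load_metadata(METADATA_CSV)
# A frame 0.6 s after the first sample is matched to the 0.5 s sample.
print(nearest_row(rows, 1700000000600000)["Sensor Latitude"])  # 34.0561
```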

The video metadata is used to compute the flight path of the video sensor, the video image frame center, and the footprint on the ground of the video image frames. Full Motion Video also supports the Motion Imagery Standards Board (MISB) metadata specifications. All the MISB parameters that are provided will be encoded into the final metadata compliant video.

One set of motion imagery parameters includes the map coordinates of the four corners of the video image frame projected to the ground. If these four corner map coordinates are provided, they will be used. Otherwise, the application will compute the video footprint from a subset of required parameters.
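The four corner coordinates are enough to relate pixels to the ground under a simplifying assumption. The following Python sketch assumes flat ground and projected map coordinates in meters. Under those assumptions, a planar projective transform (homography) fitted to the four corners maps any pixel in the frame to map coordinates. This is an illustration of the general idea only, not how ArcGIS Pro computes its video-to-map transform. The frame size, corner coordinates, and function names are hypothetical.

```python
# Planar homography from four pixel-to-ground corner correspondences.
# Simplified model: flat ground, projected map coordinates in meters.


def solve_linear(a, b):
    """Solve a small dense system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def homography_from_corners(pixel_corners, map_corners):
    """Return 9 coefficients (last one fixed to 1) mapping pixel -> map."""
    a, b = [], []
    for (x, y), (u, v) in zip(pixel_corners, map_corners):
        # u * (h6*x + h7*y + 1) = h0*x + h1*y + h2, rearranged as a linear row.
        a.append([x, y, 1, 0, 0, 0, -x * u, -y * u])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -x * v, -y * v])
        b.append(v)
    return solve_linear(a, b) + [1.0]


def pixel_to_map(coeffs, x, y):
    """Apply the homography to one pixel coordinate."""
    w = coeffs[6] * x + coeffs[7] * y + coeffs[8]
    return ((coeffs[0] * x + coeffs[1] * y + coeffs[2]) / w,
            (coeffs[3] * x + coeffs[4] * y + coeffs[5]) / w)


# Hypothetical 1920 x 1080 frame, corners ordered top-left, top-right,
# bottom-right, bottom-left. The far edge (top of frame) covers more ground
# than the near edge, as in an oblique view.
frame_w, frame_h = 1920, 1080
pixels = [(0, 0), (frame_w, 0), (frame_w, frame_h), (0, frame_h)]
ground = [(400.0, 1500.0), (1600.0, 1500.0), (1200.0, 900.0), (800.0, 900.0)]
coeffs = homography_from_corners(pixels, ground)
print(pixel_to_map(coeffs, frame_w / 2, frame_h / 2))  # ground point under frame center
```

Four correspondences determine the eight unknown coefficients exactly. A real sensor model also accounts for terrain and lens effects, which this sketch ignores.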

To compute and display the relative corner points of the video frame footprint as a frame outline on the map, the 13 metadata fields listed below are required:

- Precision Timestamp

- Sensor Latitude

- Sensor Longitude

- Sensor Ellipsoid Height, or Sensor True Altitude

- Platform Heading Angle

- Platform Pitch Angle

- Platform Roll Angle

- Sensor Relative Roll Angle

- Sensor Relative Elevation Angle

- Sensor Relative Azimuth Angle

- Sensor Horizontal Field of View

- Sensor Vertical Field of View

- Far Distance

When the metadata is complete and accurate, the application will calculate the video frame corners, and the size, shape, and position of the video frame outline, which can then be displayed on a map. These 13 fields comprise the minimum metadata required to compute the transform between video and map, to display the video footprint on the map, and to enable other functionality such as digitizing and marking on the video and the map.
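For the simplest special case, the relationship between these fields and the footprint reduces to trigonometry. That case is a camera pointing straight down (nadir) from height h over flat ground. The footprint width is 2·h·tan(HFOV/2) and its depth is 2·h·tan(VFOV/2). The Python sketch below computes this footprint and rotates its corners by the platform heading. It ignores pitch, roll, the sensor relative angles, terrain, and Earth curvature. It is therefore only an illustration, not the application's footprint computation. The function name and the assumption that the top of the image faces the direction of travel are hypothetical.

```python
import math


def nadir_footprint(height_m, hfov_deg, vfov_deg, heading_deg=0.0):
    """Ground footprint of a straight-down camera over flat ground.

    Returns (width_m, depth_m, corners). Corners are (east, north) offsets in
    meters from the point directly below the sensor, ordered front-left,
    front-right, back-right, back-left. Heading is clockwise from north.
    Assumption: the top of the image faces the heading direction.
    """
    width = 2 * height_m * math.tan(math.radians(hfov_deg) / 2)
    depth = 2 * height_m * math.tan(math.radians(vfov_deg) / 2)
    theta = math.radians(heading_deg)
    corners = []
    for right, forward in [(-width / 2, depth / 2), (width / 2, depth / 2),
                           (width / 2, -depth / 2), (-width / 2, -depth / 2)]:
        # Rotate the camera-aligned (right, forward) offset into (east, north).
        east = right * math.cos(theta) + forward * math.sin(theta)
        north = -right * math.sin(theta) + forward * math.cos(theta)
        corners.append((east, north))
    return width, depth, corners


width, depth, corners = nadir_footprint(1000.0, 60.0, 45.0, heading_deg=30.0)
print(f"{width:.1f} m x {depth:.1f} m")  # 1154.7 m x 828.4 m
```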

Full Motion Video supports Video Moving Target Indicator (VMTI) data, based on object tracking methods in motion imagery. If VMTI data is recorded in a file separate from the associated video file, you can encode it into the video file using the Video Multiplexer tool.

The performance of the resulting multiplexed video file depends on the type and quality of the data contained in the metadata file, and how accurately the video data and metadata files are synchronized. If the metadata is not accurate or contains anomalies, these discrepancies will be encoded in the video and displayed on the map when the video is played. If the metadata.csv file only contains the UNIX Time Stamp, Sensor Latitude, and Sensor Longitude fields, the location of the sensor will be displayed on the map, but the footprint of the video frames will not be displayed, and some functionality such as digitizing features and measuring distance in the video will not be supported.
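You can check whether a metadata CSV supports a full footprint or only sensor location by inspecting its header. In the following Python sketch, the field names come from the required-field list above. Treating `Precision Timestamp` and `UNIX Time Stamp` as interchangeable is an assumption, and the function names are hypothetical. The check inspects only column names. It cannot detect missing, inaccurate, or anomalous values.

```python
import csv
import io

# Each requirement is a set of acceptable column names; any one satisfies it.
TIMESTAMP = {"Precision Timestamp", "UNIX Time Stamp"}  # assumption: equivalent
LOCATION_FIELDS = [TIMESTAMP, {"Sensor Latitude"}, {"Sensor Longitude"}]
FOOTPRINT_FIELDS = LOCATION_FIELDS + [
    {"Sensor Ellipsoid Height", "Sensor True Altitude"},
    {"Platform Heading Angle"},
    {"Platform Pitch Angle"},
    {"Platform Roll Angle"},
    {"Sensor Relative Roll Angle"},
    {"Sensor Relative Elevation Angle"},
    {"Sensor Relative Azimuth Angle"},
    {"Sensor Horizontal Field of View"},
    {"Sensor Vertical Field of View"},
    {"Far Distance"},
]


def missing(header, required):
    """Return the unmet requirements, each as a sorted list of accepted names."""
    present = set(header)
    return [sorted(alts) for alts in required if not alts & present]


def capability(csv_text):
    """Classify a metadata CSV by which display capability its header supports."""
    header = [name.strip() for name in next(csv.reader(io.StringIO(csv_text)))]
    if not missing(header, FOOTPRINT_FIELDS):
        return "footprint"
    if not missing(header, LOCATION_FIELDS):
        return "sensor location only"
    return "insufficient"


print(capability("UNIX Time Stamp,Sensor Latitude,Sensor Longitude\n1,34.1,-117.2\n"))
```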

Metadata can be entered in the Video_Multiplexer_Field_Mapping_Template.csv metadata template in the C:\Program Files\ArcGIS\Pro\Resources\MotionImagery folder. This template contains the essential metadata fields needed to compute the video frame footprint. If the field names in your metadata file don't match the template field names, you can map them to the template field names in the Video_Multiplexer_Field_Mapping_Template.csv template.
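The field-matching idea amounts to renaming source columns to the template's field names. The Python sketch below shows only that renaming step in plain Python. It does not reproduce the layout of Video_Multiplexer_Field_Mapping_Template.csv. The source column names on the left of `FIELD_MAP` are hypothetical, and the function name is illustrative.

```python
import csv
import io

# Hypothetical source column names (left) mapped to template field names (right).
FIELD_MAP = {
    "time_us": "UNIX Time Stamp",
    "gps_lat": "Sensor Latitude",
    "gps_lon": "Sensor Longitude",
    "alt_msl_m": "Sensor True Altitude",
}


def rename_columns(csv_text, field_map):
    """Rewrite the CSV with mapped column names; unmapped columns are kept as-is."""
    reader = csv.DictReader(io.StringIO(csv_text))
    fieldnames = [field_map.get(name, name) for name in reader.fieldnames]
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        writer.writerow({field_map.get(k, k): v for k, v in row.items()})
    return out.getvalue()


print(rename_columns("time_us,gps_lat,gps_lon\n1,34.1,-117.2\n", FIELD_MAP))
```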

Ideally, the video data and metadata are time synchronous. If the time stamps linking the video and metadata are not accurately synchronized, the video footprint and sensor locations on the map will be offset from the view in the video player. If the time shift is observable and consistent, the multiplexer can adjust the timing of the metadata to match the video. To apply time shifts, create an optional .csv file that identifies the offset location in the video and its associated time discrepancy, and provide it as input to the Video Multiplexer tool. Use the Video_Multiplexer_TimeShift_Template.csv template in the C:\Program Files\ArcGIS\Pro\Resources\MotionImagery folder to specify the offsets. The template contains columns labeled elapsed time, which is the location in the video where the time shift occurs, and time shift, which is the amount of time offset. If the time shift between the video and metadata is inconsistent, you can list multiple positions in the video, each with its associated time shift. This tells the multiplexer where to place the appropriate metadata relative to the video timing.
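The time-shift entries can be illustrated with a Python sketch. It makes two assumptions that this topic does not confirm. First, each listed shift stays in effect from its elapsed time until the next listed position. Second, the shift is added to the metadata time. The entries and function names are hypothetical, and the Video Multiplexer tool may interpolate or apply signs differently.

```python
import bisect

# Hypothetical entries: (elapsed time in the video in seconds, time shift in seconds).
TIME_SHIFTS = [(0.0, 0.0), (120.0, 1.5), (300.0, 2.0)]


def shift_at(elapsed_s, shifts):
    """Return the shift in effect at elapsed_s (the last entry at or before it)."""
    positions = [position for position, _ in shifts]
    i = bisect.bisect_right(positions, elapsed_s) - 1
    return shifts[i][1] if i >= 0 else 0.0


def corrected_time(metadata_elapsed_s, shifts):
    """Apply the piecewise-constant shift (assumed sign convention: add)."""
    return metadata_elapsed_s + shift_at(metadata_elapsed_s, shifts)


for t in (60.0, 200.0, 450.0):
    print(t, "->", corrected_time(t, TIME_SHIFTS))
```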

Object tracking

Full Motion Video supports two object tracking methods: video moving target indication (VMTI) and deep learning–based tracking. VMTI methods track objects either manually or automatically and encode the position of the object within a specific video frame. Each object has an identifier (ID) and a bounding rectangle associated with the object, which is saved with the archived video. When the video is played, the information associated with the VMTI object is displayed. Full Motion Video requires that encoded video metadata adhere to the Motion Imagery Standards Board (MISB) Video Moving Target Indicator and Track Metadata standard (ST 0903).
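The per-frame ID and bounding rectangle described above can be modeled with a small data structure. The following Python sketch is hypothetical and is not the MISB ST 0903 encoding, which stores this information as KLV metadata in the video stream. It shows how detections sharing an ID form a track, and how the rectangles for one frame would be selected for display during playback.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    frame: int      # video frame index
    target_id: int  # identifier that persists across frames
    x: int          # bounding rectangle: top-left corner, in pixels
    y: int
    width: int
    height: int


def build_tracks(detections):
    """Group detections by target ID, with each track ordered by frame."""
    tracks = defaultdict(list)
    for det in detections:
        tracks[det.target_id].append(det)
    return {tid: sorted(dets, key=lambda d: d.frame) for tid, dets in tracks.items()}


def boxes_in_frame(detections, frame):
    """Return the detections to draw over a given frame during playback."""
    return [d for d in detections if d.frame == frame]


detections = [Detection(2, 7, 10, 10, 5, 5), Detection(1, 7, 8, 9, 5, 5),
              Detection(1, 3, 50, 50, 4, 4)]
print({tid: [d.frame for d in track] for tid, track in build_tracks(detections).items()})
```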

Deep learning–based object tracking provides automated and computer-assisted tools to address a variety of situations when identifying and tracking objects in video imagery. It relies on deep learning technology to assist in object detection, extraction, and matching. Build a deep learning model to identify specific objects and classes of features, and use the suite of tools to identify, select, and track those objects of interest. You can digitize the centroids of an object's identification rectangle and save them as a point feature class in the project's geodatabase. You can then display the objects as the archived video plays.
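A rectangle's centroid is simply its center point. The Python sketch below computes centroids and writes them to CSV as a neutral exchange format. Saving to a geodatabase is done by the ArcGIS tools and is not reproduced here. The sketch works in pixel coordinates. Placing the points on the map would additionally require the video-to-map transform. The tuple layout and function names are hypothetical.

```python
import csv
import io


def centroid(x, y, width, height):
    """Center of an axis-aligned rectangle given its top-left corner and size."""
    return (x + width / 2, y + height / 2)


def centroids_to_csv(boxes):
    """boxes: iterable of (object_id, frame, x, y, width, height) tuples."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["object_id", "frame", "cx", "cy"])
    for object_id, frame, x, y, w, h in boxes:
        cx, cy = centroid(x, y, w, h)
        writer.writerow([object_id, frame, cx, cy])
    return out.getvalue()


print(centroids_to_csv([(1, 5, 10, 20, 4, 6), (1, 6, 12, 21, 4, 6)]))
```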

Note:

You must install deep learning framework packages to perform deep learning–based object tracking. See the system requirements for more details.

Summary

The geospatially enabled characteristics of Full Motion Video are well suited for real-time or forensic analysis and situation awareness applications. The capability to project and display the video frame footprint and flight path on the map gives the video important context and allows bidirectional collection of features in the video player and the map.

These capabilities, together with annotated video bookmarks, allow an analyst to identify specific video frames or segments for further analysis and share this information with other stakeholders. By integrating FMV-compliant video with full GIS functionality, you can synthesize important contextual information to support informed decision making in operational environments.