Whenever we look at the world around us, it is very natural for us to organize, group, differentiate, and catalog what we see to help us make better sense of it; this type of mental classification process is fundamental to learning and comprehension. Similarly, to help you learn about and better comprehend your data, you can use the Spatially Constrained Multivariate Clustering tool. Given the number of clusters to create, it will look for a solution where all the features within each cluster are as similar as possible, and all the clusters themselves are as different as possible. Feature similarity is based on the set of attributes that you specify for the Analysis Fields parameter, and the solution can optionally be subject to constraints on the size of the clusters. The algorithm this tool uses employs a connectivity graph (minimum spanning tree) and a method called SKATER to find natural clusters in your data, and it uses evidence accumulation to evaluate the likelihood of cluster membership.

Tip:

Clustering, grouping, and classification techniques are some of the most widely used methods in machine learning. The Spatially Constrained Multivariate Clustering tool uses unsupervised machine learning methods to determine natural clustering in your data. These classification methods are considered unsupervised, as they do not require a set of preclassified features to guide or train on in order to determine the clustering of your data.

While hundreds of cluster analysis algorithms such as these exist, the underlying clustering problem is classified as NP-hard. This means that the only way to ensure that a solution will perfectly maximize both within-cluster similarities and between-cluster differences is to try every possible combination of the features you want to cluster. While this may be feasible with a handful of features, the problem quickly becomes intractable as the number of features grows.
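To see how quickly exhaustive search grows, the short Python sketch below counts the ways to split n features into exactly k non-empty clusters (the Stirling numbers of the second kind). It ignores the spatial constraint entirely, so it illustrates only the general combinatorial growth, not the exact search space of this tool. The function name `stirling2` is hypothetical.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def stirling2(n: int, k: int) -> int:
    """Number of ways to partition n labeled features into k non-empty clusters."""
    if n == k:
        return 1  # includes n == k == 0
    if k == 0 or k > n:
        return 0
    # The last feature either starts a new cluster, or joins one of the k
    # clusters already formed by the other n - 1 features.
    return stirling2(n - 1, k - 1) + k * stirling2(n - 1, k)


if __name__ == "__main__":
    for n in (10, 20, 50):
        print(n, stirling2(n, 4))
```

Even ten features can already be split into 9,330 different three-cluster partitions, and the count explodes as n increases.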

Not only is it intractable to ensure that you've found an optimal solution, it is also unrealistic to try to identify a clustering algorithm that will perform best for all possible data scenarios. Clusters come in all different shapes, sizes, and densities; attribute data can include a variety of ranges, symmetry, continuity, and measurement units. This explains why so many different cluster analysis algorithms have been developed over the past 50 years. It is most appropriate, therefore, to think of Spatially Constrained Multivariate Clustering as an exploratory tool that can help you learn more about underlying structures in your data.

Potential applications

Some of the ways that this tool might be applied are as follows:

- If you've collected data on animal sightings to better understand their territories, the Spatially Constrained Multivariate Clustering tool might be helpful. Understanding where and when salmon congregate at different life stages, for example, could assist with designing protected areas that may help ensure successful breeding.

- As an agronomist, you may want to classify different types of soils in your study area. Using Spatially Constrained Multivariate Clustering on the soil characteristics found for a series of samples can help you identify clusters of distinct, spatially contiguous soil types.

- Clustering customers by their buying patterns, demographic characteristics, and travel patterns may help you design an efficient marketing strategy for your company's products.

- Urban planners often need to divide cities into distinct neighborhoods to efficiently locate public facilities and promote local activism and community engagement. Using Spatially Constrained Multivariate Clustering on the physical and demographic characteristics of city blocks can help planners identify spatially contiguous areas of their city that have similar physical and demographic characteristics.

- Ecological Fallacy is a well-known problem for statistical inference whenever analysis is performed on aggregated data. Often, the aggregation scheme used for analysis has nothing to do with what you want to analyze. Census data, for example, is aggregated based on population distributions that may not be the best choice for analyzing wildfires. Partitioning the smallest aggregation units possible into homogeneous regions for a set of attributes that accurately relate to the analytic questions at hand is an effective method for reducing aggregation bias and avoiding Ecological Fallacy.

Inputs

This tool takes point or polygon Input Features, a path for the Output Features, one or more Analysis Fields, an integer value representing the Number of Clusters to create, and the type of Spatial Constraint that should be applied within the clustering algorithm. There are also a number of optional parameters that can be used to set Cluster Size Constraints either for a minimum or maximum number of features per cluster or a minimum or maximum attribute value sum per cluster, and an Output Table for Evaluating Optimal Number of Clusters.

Analysis fields

Select fields that are numeric, reflecting ratio, interval, or ordinal measurement systems. While nominal data can be represented using dummy (binary) variables, these generally do not work as well as other numeric variable types. For example, you could create a variable called Rural and assign 1 to each feature (each census tract, for example) if it is mostly rural and 0 if it is mostly urban. A better representation for this variable for use with Spatially Constrained Multivariate Clustering, however, would be the amount or proportion of rural acreage associated with each feature.

Note:

The values in Analysis Fields are standardized by the tool because variables with large variances (where data values are spread out around the mean) tend to have a larger influence on the clusters than variables with small variances. Standardization of the attribute values involves a z-transform: the mean of all values is subtracted from each value, and the result is divided by the standard deviation of all values. Standardization puts all of the attributes on the same scale even when they are represented by very different types of numbers: rates (numbers from 0 to 1.0), population (with values larger than 1 million), and distances (kilometers, for example).
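A minimal Python sketch of the z-transform follows. It assumes the population standard deviation (dividing by n); whether the tool divides by n or n - 1 is not stated here, and that choice only rescales every value of a field by the same factor. `z_transform` is a hypothetical helper, not part of any ArcGIS API, and the sample values are illustrative.

```python
import statistics


def z_transform(values):
    """Return z-scores: (value - mean) / standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)  # population SD: an assumption, see above
    if sd == 0:
        # A constant field carries no information for separating clusters.
        return [0.0] * len(values)
    return [(v - mean) / sd for v in values]


if __name__ == "__main__":
    rates = [0.12, 0.35, 0.08, 0.51]                       # proportions, 0 to 1.0
    population = [1_200_000, 85_000, 3_400_000, 640_000]  # counts in the millions
    for field in (rates, population):
        z = z_transform(field)
        # After standardization both fields have mean 0 and standard deviation 1.
        print([round(v, 2) for v in z], round(statistics.pstdev(z), 6))
```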

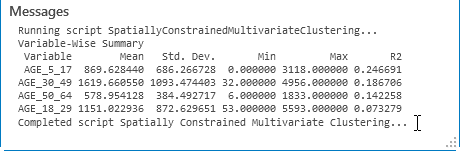

You should select variables that you think will distinguish one cluster of features from another. Suppose, for example, you are interested in clustering school districts by student performance on standardized achievement tests. You might select Analysis Fields that include overall test scores, results for particular subjects such as math or reading, the proportion of students meeting some minimum test score threshold, and so on. When you run the Spatially Constrained Multivariate Clustering tool, an R2 value is computed for each variable and reported in the messages window. In the summary below, for example, school districts are clustered based on student test scores, the percentage of adults in the area who didn't finish high school, per-student spending, and average student-to-teacher ratios. Notice that the TestScores variable has the highest R2 value. This indicates that this variable divides the school districts into clusters most effectively. The R2 value reflects how much of the variation in the original TestScores data was retained after the clustering process, so the larger the R2 value is for a particular variable, the better that variable is at discriminating among your features.

Dive-in:

R2 is computed as:

$$R^2 = \frac{TSS - ESS}{TSS}$$

where TSS is the total sum of squares and ESS is the error (within-cluster) sum of squares. TSS is calculated by squaring and then summing the deviations of each value from the global mean for a variable. ESS is calculated the same way, except that deviations are computed group by group: each value's deviation from the mean of the group it belongs to is squared, and these squared deviations are summed.
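The R2 calculation for a single analysis field can be sketched in Python, given the cluster each feature was assigned to. The helper name `r_squared` and the sample values are illustrative assumptions. Because R2 is a ratio of sums of squared deviations, applying a z-transform to the field first does not change the result.

```python
from collections import defaultdict


def r_squared(values, labels):
    """R2 = (TSS - ESS) / TSS for one analysis field and its cluster labels."""
    n = len(values)
    global_mean = sum(values) / n
    tss = sum((v - global_mean) ** 2 for v in values)

    # Mean of each cluster, used for the group-by-group deviations.
    groups = defaultdict(list)
    for v, label in zip(values, labels):
        groups[label].append(v)
    cluster_mean = {label: sum(vs) / len(vs) for label, vs in groups.items()}

    ess = sum((v - cluster_mean[label]) ** 2 for v, label in zip(values, labels))
    return (tss - ess) / tss if tss > 0 else 0.0


if __name__ == "__main__":
    test_scores = [61, 64, 66, 82, 85, 88]  # hypothetical TestScores values
    clusters = [1, 1, 1, 2, 2, 2]
    print(round(r_squared(test_scores, clusters), 3))
```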

Cluster size constraints

The size of the clusters can be managed with the Cluster Size Constraints parameter. You can set minimum or maximum thresholds that each cluster must meet. The size constraints can be either the Number of Features that each cluster contains or the sum of an Attribute Value. For example, if you were clustering U.S. counties based on a set of economic variables, you could specify that each cluster has a minimum population of 5 million and a maximum population of 25 million. Alternatively, you could specify that each cluster must contain a minimum of 30 counties.

When a Maximum per Cluster constraint is specified, the algorithm starts with a single cluster and will split clusters that are spatially contiguous and similar in value. New clusters will be created until all cluster sizes are below the Maximum per Cluster value, taking into account all of the variables with each split.

SKATER forms clusters by spatially partitioning data that has similar values for all of the Analysis Fields specified. It is possible that the Cluster Size Constraints may not be honored for all clusters. This can occur if the defined size constraints do not lend themselves to optimal cluster definitions, if both a maximum and minimum constraint were set to values close to each other, or because of the way the minimum spanning tree has been constructed based on spatial constraints. If this happens, the tool will finish, and the clusters that did not meet the specified requirements will be reported in the messages window.
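The check for clusters that miss their size requirements can be sketched as follows. This is an illustrative Python helper under stated assumptions, not the tool's implementation: `size_violations` is hypothetical, size is the feature count when no weights are given (Number of Features), and otherwise the per-cluster sum of an attribute such as population (Attribute Value).

```python
from collections import defaultdict


def size_violations(labels, minimum=None, maximum=None, weights=None):
    """Return {cluster_id: size} for clusters outside [minimum, maximum].

    labels:  cluster ID of each feature.
    weights: optional per-feature attribute values; if omitted, each feature counts 1.
    """
    totals = defaultdict(float)
    for i, label in enumerate(labels):
        totals[label] += 1 if weights is None else weights[i]
    return {
        label: size
        for label, size in sorted(totals.items())
        if (minimum is not None and size < minimum)
        or (maximum is not None and size > maximum)
    }


if __name__ == "__main__":
    labels = [1, 1, 1, 2, 2, 3]
    print(size_violations(labels, minimum=2))  # clusters with fewer than 2 features
    print(size_violations(labels, maximum=25, weights=[5, 5, 5, 20, 10, 1]))
```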

Number of clusters

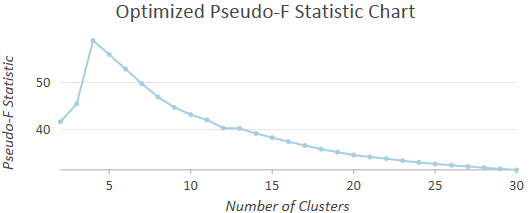

Sometimes you will know the number of clusters most appropriate to your question or problem. If you have five sales managers and want to assign each to their own contiguous region, for example, you would use 5 for the Number of Clusters parameter. In many cases, however, you won't have any criteria for selecting a specific number of clusters; instead, you just want the number that best distinguishes feature similarities and differences. To help you in this situation, you can leave the Number of Clusters parameter blank and let the Spatially Constrained Multivariate Clustering tool assess the effectiveness of dividing your features into 2, 3, 4, and up to 30 clusters. The clustering effectiveness is measured using the Calinski-Harabasz pseudo F-statistic, which is a ratio of between-cluster variance to within-cluster variance. In other words, it is a ratio that reflects both within-group similarity and between-group difference.
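In its standard form, the Calinski-Harabasz pseudo F-statistic can be written in terms of an R2 summed over all analysis fields, with n features and n_c clusters:

$$F = \frac{R^2 / (n_c - 1)}{(1 - R^2) / (n - n_c)}$$

The Python sketch below computes this standard formulation for a given labeling. It assumes the field values are already standardized, and the name `pseudo_f` is hypothetical; the tool's exact computation may differ in details.

```python
def pseudo_f(rows, labels):
    """Calinski-Harabasz pseudo F-statistic.

    rows:   one list of (standardized) analysis field values per feature.
    labels: the cluster each feature belongs to.
    """
    n, n_fields = len(rows), len(rows[0])
    clusters = sorted(set(labels))
    n_c = len(clusters)
    if not 1 < n_c < n:
        raise ValueError("need at least 2 clusters and fewer clusters than features")

    def sum_sq(members):
        means = [sum(r[j] for r in members) / len(members) for j in range(n_fields)]
        return sum((r[j] - means[j]) ** 2 for r in members for j in range(n_fields))

    sst = sum_sq(rows)  # deviations from the global means
    sse = sum(sum_sq([r for r, lab in zip(rows, labels) if lab == c]) for c in clusters)
    if sst == 0:
        raise ValueError("all features have identical values")
    if sse == 0:
        return float("inf")  # every cluster is internally identical
    r2 = (sst - sse) / sst
    return (r2 / (n_c - 1)) / ((1 - r2) / (n - n_c))


if __name__ == "__main__":
    rows = [[1], [2], [3], [10], [11], [12], [20], [21]]
    two = [0, 0, 0, 1, 1, 1, 1, 1]
    three = [0, 0, 0, 1, 1, 1, 2, 2]
    # The labeling with the larger value separates the features more effectively.
    print(pseudo_f(rows, two), pseudo_f(rows, three))
```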

Suppose you want to create four spatially contiguous clusters. In this case, the tool will create a minimum spanning tree reflecting both the spatial structure of your features and their associated analysis field values. The tool then determines the best place to cut the tree to create two separate clusters. Next, it determines which of the two resulting clusters should be divided to yield the best three-cluster solution; one of the two clusters is divided, and the other remains intact. Finally, it determines which of the three resulting clusters should be divided to provide the best four-cluster solution. For each division, the best solution is the one that maximizes both within-cluster similarity and between-cluster difference. A cluster can no longer be divided (except arbitrarily) when the analysis field values for all the features within that cluster are identical. If every resulting cluster contains only features with identical values, the Spatially Constrained Multivariate Clustering tool stops creating new clusters even if it has not yet reached the Number of Clusters value you specified, because there is no basis for dividing a cluster when all of the Analysis Fields have identical values.
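The divisive process described above can be sketched in Python. This is a simplified, brute-force illustration under stated assumptions: a single analysis field, a tree given as an edge list, and a cost equal to the total within-cluster sum of squared deviations (SSD), similar in spirit to the criterion in the published SKATER method. It ignores singleton avoidance and size constraints, and all function names are hypothetical.

```python
def components(nodes, edges):
    """Connected components of an undirected graph, found with union-find."""
    parent = {v: v for v in nodes}

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]  # path halving
            v = parent[v]
        return v

    for a, b in edges:
        parent[find(a)] = find(b)
    groups = {}
    for v in nodes:
        groups.setdefault(find(v), []).append(v)
    return sorted(groups.values())


def ssd(group, values):
    """Sum of squared deviations of a group's values from the group mean."""
    mean = sum(values[v] for v in group) / len(group)
    return sum((values[v] - mean) ** 2 for v in group)


def total_ssd(nodes, edges, values):
    """Total within-cluster SSD when the clusters are the components of `edges`."""
    return sum(ssd(c, values) for c in components(nodes, edges))


def partition_tree(tree_edges, values, k):
    """Remove one tree edge per step until there are k clusters or no split helps.

    Each removal splits exactly one existing cluster in two; the edge chosen is
    the one that leaves the smallest total within-cluster SSD.
    """
    nodes = list(range(len(values)))
    edges = list(tree_edges)
    while len(components(nodes, edges)) < k and edges:
        if total_ssd(nodes, edges, values) == 0:
            break  # every cluster holds identical values: no basis for a split
        best = min(edges, key=lambda e: total_ssd(nodes, [x for x in edges if x != e], values))
        edges.remove(best)
    return components(nodes, edges)


if __name__ == "__main__":
    path = [(i, i + 1) for i in range(7)]  # a toy spanning tree over 8 features
    print(partition_tree(path, [1, 1, 1, 10, 10, 10, 20, 20], 3))
    # → [[0, 1, 2], [3, 4, 5], [6, 7]]
```

Recomputing components for every candidate edge keeps the sketch short but is slow; it is meant to show the split-one-cluster-at-a-time logic, not performance.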

Spatial constraints

The Spatial Constraints parameter ensures that the resultant clusters will be spatially proximal. The Contiguity options are enabled for polygon feature classes and indicate that features can only be part of the same cluster if they share an edge (Contiguity edges only) or if they share either an edge or a vertex (Contiguity edges corners) with another member of the cluster. The polygon contiguity options are not good choices, however, if your dataset includes clusters of discontiguous polygons or polygons with no contiguous neighbors at all.
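The difference between the two contiguity options can be illustrated with the simplest possible case: square polygons on a regular grid. The Python sketch below assumes that simplification (real polygon contiguity is tested on shared boundary geometry), and `grid_neighbors` is a hypothetical helper.

```python
def grid_neighbors(row, col, n_rows, n_cols, corners):
    """Neighbors of a square cell on a regular grid.

    corners=False resembles "Contiguity edges only" (a shared edge is required).
    corners=True resembles "Contiguity edges corners" (a shared edge or vertex).
    """
    offsets = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # cells sharing an edge
    if corners:
        offsets += [(-1, -1), (-1, 1), (1, -1), (1, 1)]  # cells sharing only a vertex
    result = []
    for dr, dc in offsets:
        r, c = row + dr, col + dc
        if 0 <= r < n_rows and 0 <= c < n_cols:
            result.append((r, c))
    return sorted(result)


if __name__ == "__main__":
    # The center cell of a 3 x 3 grid has 4 edge neighbors and 8 edge-or-corner neighbors.
    print(grid_neighbors(1, 1, 3, 3, corners=False))
    print(grid_neighbors(1, 1, 3, 3, corners=True))
```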

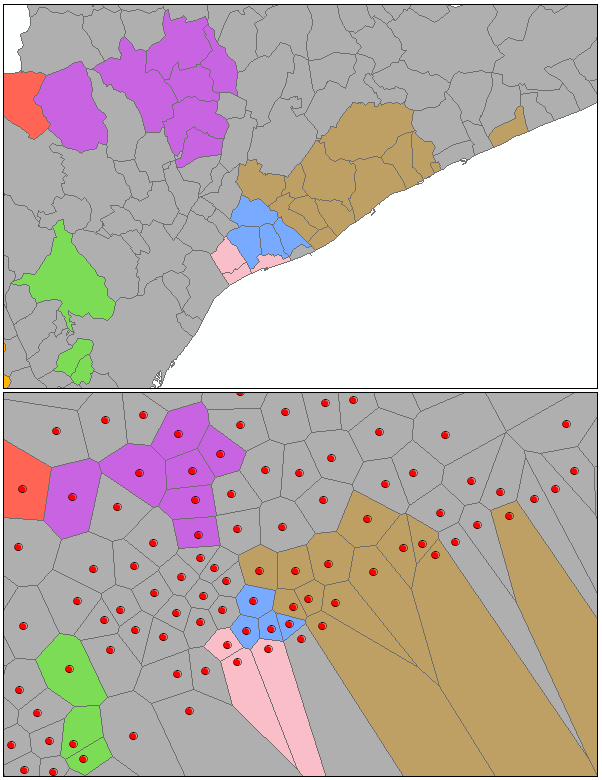

The Trimmed Delaunay triangulation option is appropriate for point or polygon features and ensures that a feature will only be included in a cluster if at least one other cluster member is a natural neighbor (Delaunay Triangulation). Conceptually, Delaunay Triangulation creates a nonoverlapping mesh of triangles from feature centroids. Each feature is a triangle node, and nodes that share edges are considered neighbors. These triangles are then clipped to a convex hull to ensure that features cannot be neighbors with any features outside of the convex hull. This option should not be used for datasets with coincident features. Also, because the Delaunay Triangulation method converts features to Thiessen polygons to determine neighbor relationships, the results from using this option may not always be what you expect, especially with polygon features and sometimes with peripheral features in your dataset. In the illustration below, notice that some of the grouped original polygons are not contiguous. When they are converted to Thiessen polygons, however, all the grouped features do, in fact, share an edge.

If you want the resultant clusters to be both spatially and temporally proximal, create a spatial weights matrix file (SWM) using the Generate Spatial Weights Matrix tool, and select Space time window for the Conceptualization of Spatial Relationships parameter. You can then specify the SWM file you created with the Generate Spatial Weights Matrix tool for the Weights Matrix File parameter when you run the Spatially Constrained Multivariate Clustering tool.

Note:

While the spatial relationships among your features are stored in an SWM file and used by the Spatially Constrained Multivariate Clustering tool to impose spatial constraints, there is no actual weighting involved in the grouping process. The SWM file is only used to keep track of which features can and cannot be included in the same cluster.
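The idea that the spatial relationships only record which features may share a cluster, with no weighting, can be illustrated with an unweighted neighbor structure. The Python sketch below builds space-time window neighbors from (x, y, t) tuples using a distance threshold and a time window. It does not read or write SWM files (in the tool, these relationships come from Generate Spatial Weights Matrix), and the function name and thresholds are hypothetical.

```python
import math


def space_time_neighbors(features, distance, time_window):
    """Unweighted neighbor sets for a space-time window.

    features: list of (x, y, t) tuples.
    Two features are neighbors if they are within `distance` in space AND
    within `time_window` in time. Returns {index: set of neighbor indices}.
    """
    neighbors = {i: set() for i in range(len(features))}
    for i, (xi, yi, ti) in enumerate(features):
        for j in range(i + 1, len(features)):
            xj, yj, tj = features[j]
            if math.hypot(xi - xj, yi - yj) <= distance and abs(ti - tj) <= time_window:
                neighbors[i].add(j)
                neighbors[j].add(i)
    return neighbors


if __name__ == "__main__":
    pts = [(0, 0, 0), (1, 0, 1), (1, 0, 10), (50, 0, 0)]
    # Feature 2 is close in space to features 0 and 1 but too far away in time.
    print(space_time_neighbors(pts, distance=2, time_window=3))
```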

Minimum spanning tree

To limit cluster membership to contiguous or proximal features, the tool first constructs a connectivity graph representing the neighborhood relationships among features. From the connectivity graph, a minimum spanning tree is devised that summarizes both feature spatial relationships and feature data similarity. Features become nodes in the minimum spanning tree connected by weighted edges. The weight for each edge reflects the dissimilarity of the features it connects, so the minimum spanning tree favors links between similar neighboring features. After building the minimum spanning tree, a branch (edge) in the tree is pruned, creating two minimum spanning trees. The edge to be pruned is selected so that it minimizes dissimilarity in the resultant clusters, while avoiding (if possible) singletons (clusters with only one feature). At each iteration, one of the minimum spanning trees is divided by this pruning process until the Number of Clusters specified is obtained. The published method employed is called SKATER (Spatial "K"luster Analysis by Tree Edge Removal). While the branch that optimizes cluster similarity is selected for pruning at each iteration, there is no guarantee that the final result will be optimal.
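Building a minimum spanning tree from a connectivity graph can be sketched with Kruskal's algorithm. In this Python sketch, edge costs are the Euclidean distance between the features' attribute vectors, so cheaper edges join more similar features, as in SKATER as published. The choice of Kruskal's algorithm, Euclidean distance, and the function name `minimum_spanning_tree` are assumptions for illustration.

```python
import math


def minimum_spanning_tree(n, neighbor_pairs, rows):
    """Kruskal's algorithm on the connectivity graph.

    n:              number of features.
    neighbor_pairs: (i, j) pairs allowed by the spatial constraint.
    rows:           standardized analysis field values for each feature.
    Returns (i, j, cost) edges; n - 1 of them if the graph is connected.
    """
    parent = list(range(n))

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]  # path halving
            v = parent[v]
        return v

    # Cost of an edge = attribute dissimilarity of the two features it joins.
    weighted = sorted((math.dist(rows[i], rows[j]), i, j) for i, j in neighbor_pairs)
    tree = []
    for cost, i, j in weighted:
        ri, rj = find(i), find(j)
        if ri != rj:  # the edge joins two separate components, so keep it
            parent[ri] = rj
            tree.append((i, j, cost))
    return tree


if __name__ == "__main__":
    # Four features in a ring of neighbors; the costliest link (3, 0) is left out.
    print(minimum_spanning_tree(4, [(0, 1), (1, 2), (2, 3), (3, 0)], [[0.0], [1.0], [5.0], [6.0]]))
```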

Membership probabilities

The Permutations to Calculate Membership Probabilities parameter defines the number of permutations to perform for calculating cluster membership probability using evidence accumulation. Membership probabilities are included in the output feature class in the PROB field. A high membership probability indicates that the feature is similar and proximal to the cluster it is assigned to, and you can be confident the feature belongs in the cluster it was assigned. A low probability may indicate the feature is very different from the cluster it was assigned to by the SKATER algorithm or that the feature could be included in a different cluster if the Analysis Fields, Cluster Size Constraints, or Spatial Constraints parameter was changed in some way.

The number of permutations you specify defines the number of random spanning trees to be created for perturbing the spatial constraint of SKATER. The algorithm then solves for the Number of Clusters specified for each random spanning tree. Using the original clusters defined by SKATER, the permutation process keeps track of the frequency at which members of a cluster are clustered together under the changing spanning trees. Features that are prone to switching clusters due to slight changes to the spanning tree get small membership probabilities, whereas features that do not switch clusters get large membership probabilities.
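One way to turn co-membership frequencies into a probability is sketched below in Python. This is an assumed scoring rule, not the tool's documented formula: for each feature, it averages, across the permuted runs, the share of its original cluster-mates that land in the same cluster as it does. Generating the random spanning trees is not shown; the permuted labelings are taken as input, and `membership_probabilities` is a hypothetical name.

```python
def membership_probabilities(base_labels, permuted_labelings):
    """Average share of each feature's original cluster-mates that stay with it.

    base_labels:        cluster IDs from the original SKATER run.
    permuted_labelings: one list of cluster IDs per permuted run.
    Only co-membership is compared, so cluster IDs need not match between runs.
    """
    if not permuted_labelings:
        raise ValueError("need at least one permuted run")
    n = len(base_labels)
    probs = []
    for i in range(n):
        mates = [j for j in range(n) if j != i and base_labels[j] == base_labels[i]]
        if not mates:
            probs.append(1.0)  # singleton: nothing to compare against (an assumption)
            continue
        shares = [sum(run[j] == run[i] for j in mates) / len(mates) for run in permuted_labelings]
        probs.append(sum(shares) / len(shares))
    return probs


if __name__ == "__main__":
    base = [0, 0, 0, 1, 1]
    runs = [[0, 0, 1, 1, 1], [0, 0, 0, 1, 1]]
    # Feature 2 switches clusters in one run, so it gets the lowest probability.
    print(membership_probabilities(base, runs))  # → [0.75, 0.75, 0.5, 1.0, 1.0]
```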

Calculating these probabilities can take a significant amount of time for larger datasets. It is recommended that you iterate to find the optimal number of clusters for your analysis first and then calculate probabilities in a subsequent run. You can also improve performance by increasing the Parallel Processing Factor environment setting to 50.

Outputs

A number of outputs are created by the Spatially Constrained Multivariate Clustering tool. The messages can be accessed from the Geoprocessing pane by hovering over the progress bar, clicking the tool progress button, or expanding the messages section at the bottom of the Geoprocessing pane. You can also access the messages from a previous run of Spatially Constrained Multivariate Clustering via the Geoprocessing History.

The default output for the Spatially Constrained Multivariate Clustering tool is a new output feature class containing the fields used in the analysis plus a new integer field named CLUSTER_ID, identifying the group to which each feature belongs. This output feature class is added to the table of contents with a unique color rendering scheme applied to the CLUSTER_ID field.

Spatially Constrained Multivariate Clustering chart outputs

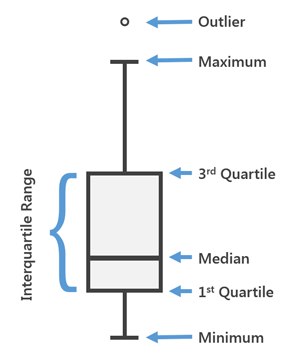

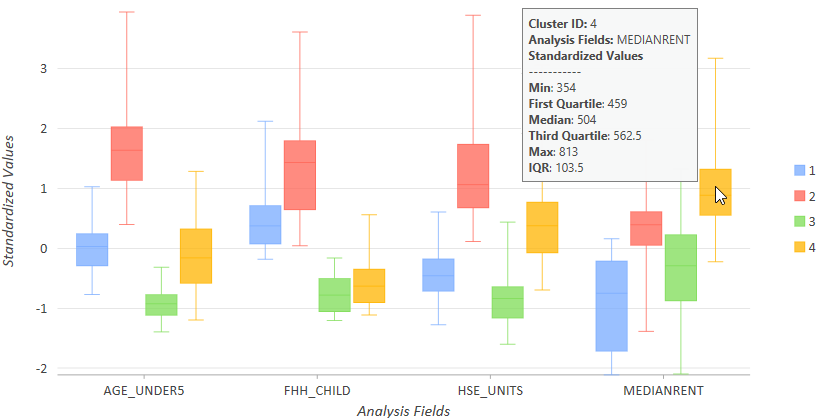

Multiple types of charts are created to summarize the clusters that were created. Box plots are used to show information about both the characteristics of each cluster and the characteristics of each variable used in the analysis. The graphic below shows how to interpret box plots and their summary values for each Analysis Field and cluster created: minimum data value, 1st quartile, median, 3rd quartile, maximum data value, and data outliers (values more than 1.5 times the interquartile range below the 1st quartile or above the 3rd quartile). Hover over the box plot on the chart to see these values as well as the interquartile range value. Any point marks falling outside the minimum or maximum (lower or upper whisker) represent data outliers.

Dive-in:

The interquartile range (IQR) is the 3rd quartile minus the 1st quartile. Low outliers are values less than Q1 - 1.5*IQR, and high outliers are values greater than Q3 + 1.5*IQR. Outliers appear in the box plots as a point symbol.
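The outlier fences can be computed with a few lines of Python. Quartiles can be computed in several ways; this sketch assumes the standard library's `statistics.quantiles` with `method="inclusive"`, which may not match the method the chart uses, so fence values on small datasets can differ slightly. `tukey_outliers` is a hypothetical name.

```python
import statistics


def tukey_outliers(values):
    """Return (q1, q3, low_fence, high_fence, outliers) using 1.5 * IQR fences."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return q1, q3, low, high, [v for v in values if v < low or v > high]


if __name__ == "__main__":
    q1, q3, low, high, outliers = tukey_outliers([1, 2, 3, 4, 5, 6, 7, 8, 9, 100])
    print(q1, q3, low, high, outliers)  # → 3.25 7.75 -3.5 14.5 [100]
```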

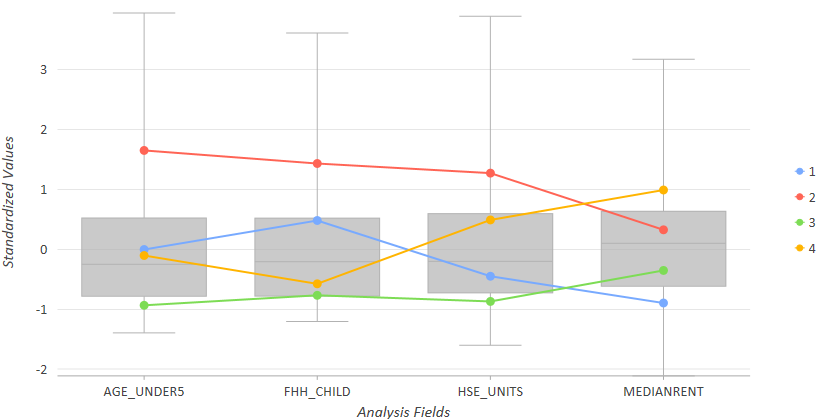

The default parallel box plot chart summarizes both the clusters and the variables within them. For example, the Spatially Constrained Multivariate Clustering tool was run on census tracts to create four clusters. In the chart below, notice that cluster 2 (red) reflects tracts with about average rents, the highest values for female-headed households with children (FHH_CHILD), the highest values for number of housing units (HSE_UNITS), and the highest values for children under the age of 5. Cluster 4 (goldenrod) reflects tracts with the highest median rents, almost the lowest number of female-headed households with children, and more than the average number of housing units. Cluster 3 (green) reflects tracts with the fewest female-headed households with children, the fewest children under the age of 5, the fewest housing units, and almost the lowest rent (not as low as cluster 1). Hover over each node of the mean lines to see the cluster's average value for each Analysis Field.

After inspecting the global summary of the analysis with the parallel box plots above, you can inspect each cluster's box plots for each variable by switching to Side-by-side in the Series tab of the Chart Properties pane. With this view of the data, you can see which group has the highest and lowest range of values within each variable. Box plots will be created for each cluster for each variable, so you can see how each cluster's values relate to the other clusters created. Hover over each variable's box plot to see the Minimum, Maximum, and Median values for each variable in each cluster. In the chart below, for example, you see that Cluster 4 (gold) has the highest values for the MEDIANRENT variable and contains tracts with a range of values from 354 to 813.

A bar chart is also created showing the number of features per cluster. Selecting each bar will also select that cluster's features in the map, which may be useful for further analysis.

When you leave the Number of Clusters parameter blank, the tool will evaluate the optimal number of clusters based on your data. Specifying a path for the Output Table for Evaluating Number of Clusters causes a chart to be created showing the pseudo F-statistic values calculated. The highest peak on the graph is the largest F-statistic, indicating how many clusters will be most effective at distinguishing the features and variables you specified. In the chart below, the F-statistic associated with four groups is highest. Five groups, with a high pseudo F-statistic, would also be a good choice.

Best practices

While there is a tendency to want to include as many Analysis Fields as possible, the Spatially Constrained Multivariate Clustering tool works best when you start with a single variable and build from there. Results are easier to interpret with fewer analysis fields, and it is also easier to determine which variables are the best discriminators.

In many scenarios, you will likely run the Spatially Constrained Multivariate Clustering tool a number of times before finding the optimal Number of Clusters, the most effective Spatial Constraints, and the combination of Analysis Fields that best separates your features into clusters.

If the tool returns 30 as the optimal number of clusters, be sure to look at the chart of the F-statistics. Choosing the number of clusters and interpreting the F-statistic chart is an art form, and a lower number of clusters may be more appropriate for your analysis.

Additional Resources

Duque, J. C., R. Ramos, and J. Surinach. 2007. "Supervised Regionalization Methods: A Survey" in International Regional Science Review 30: 195–220.

Assuncao, R. M., M. C. Neves, G. Camara, and C. Da Costa Freitas. 2006. "Efficient Regionalisation Techniques for Socio-economic Geographical Units using Minimum Spanning Trees" in International Journal of Geographical Information Science 20 (7): 797–811.